Superior Sensor Fusion

For Safer Navigation

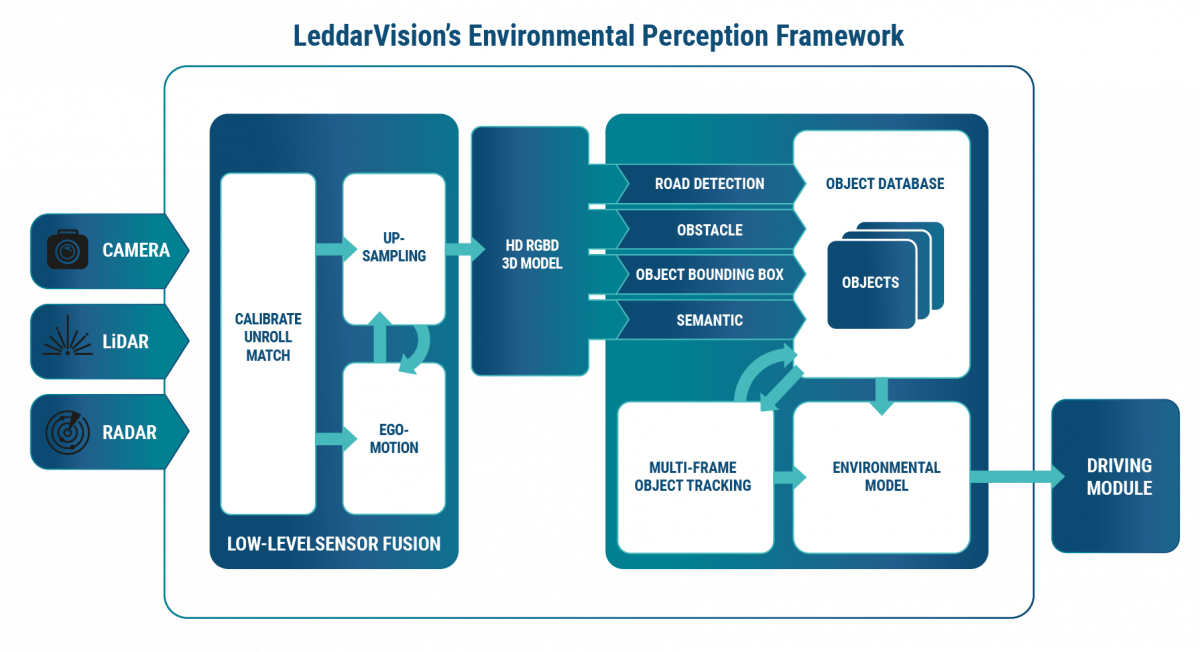

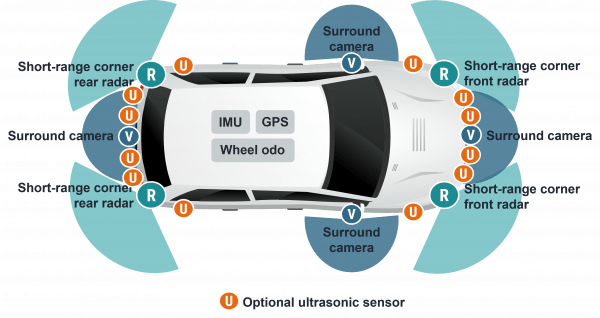

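LeddarVision software builds on LeddarTech's comprehensive, proven low-level sensor data fusion technology. It processes sensor data at a low level to efficiently build the understanding of the vehicle's environment that navigation decisions and safer driving require. LeddarVision addresses many limitations of ADAS architectures based on legacy object-level fusion by providing: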

- Scalability to AD/HAD

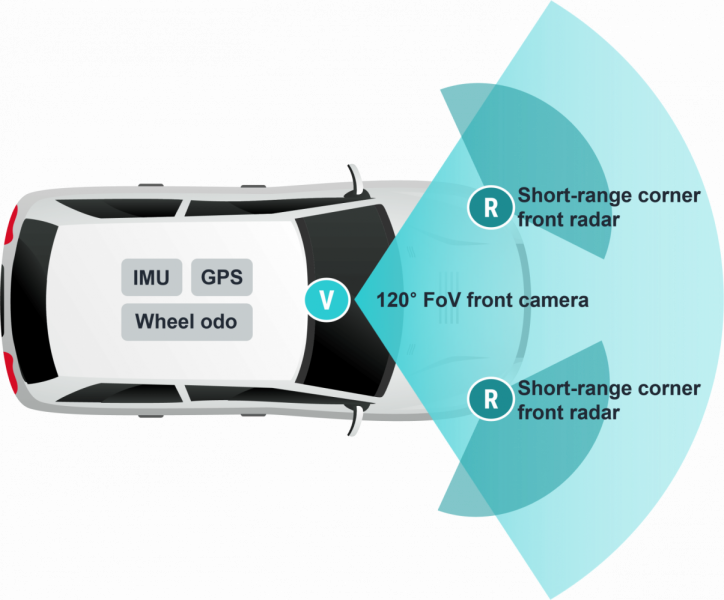

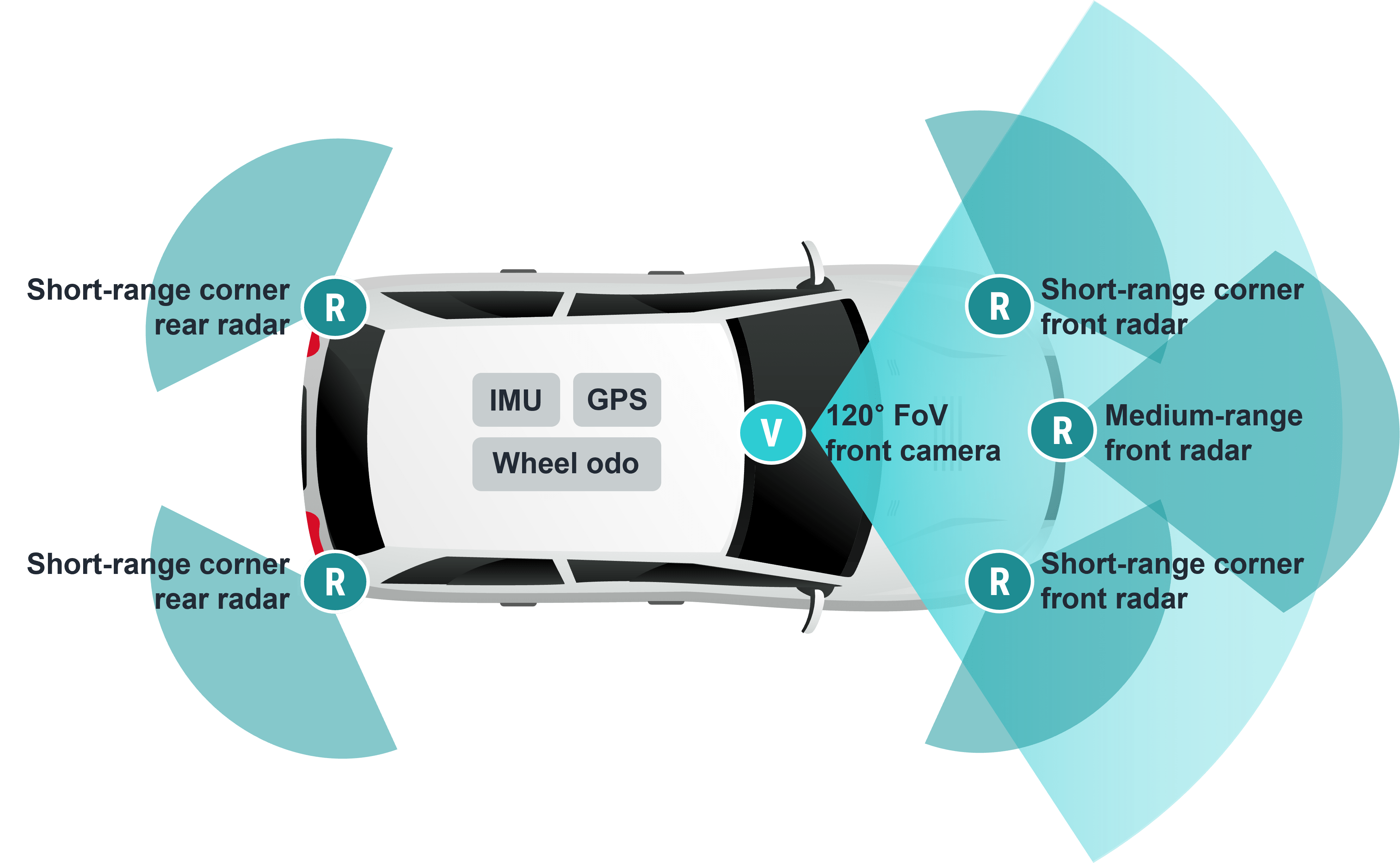

- A flexible, modular design that efficiently handles a growing variety of use cases, features, and sensor sets

- Centralized, sensor-agnostic low-level fusion that optimally fuses all sensors for higher, more reliable performance

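Low-level sensor fusion uses information from all sensors to achieve better, more reliable operation. As a result, this low-level sensor data fusion and perception solution delivers superior performance and overcomes the limitations of object-level fusion in adverse conditions such as occluded objects, object separation, camera/radar false alarms, blinding light (e.g., sun, tunnels), and distance/heading estimation.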
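The difference between the two fusion styles can be illustrated with a toy sketch. This is not LeddarTech's implementation: the grid of cells, the 0.7 threshold, the sensor confidence values, the function names, and the independence assumption behind the log-odds sum are all hypothetical, chosen only to show why combining evidence before thresholding can recover a weak object and suppress a single-sensor false alarm.

```python
import math

# Hypothetical confidence required before an object is reported.
DETECTION_THRESHOLD = 0.7


def fuse_log_odds(probabilities):
    """Combine per-sensor probabilities by summing log-odds.

    Assumes independent sensors and a uniform 0.5 prior (an illustrative
    simplification, not a claim about any real product).
    """
    total = sum(math.log(p / (1.0 - p)) for p in probabilities)
    return 1.0 / (1.0 + math.exp(-total))


def object_level_fusion(cells_per_sensor):
    """Toy object-level fusion: each sensor thresholds on its own,
    and the fusion step only sees the detections that survived."""
    detections = set()
    for cells in cells_per_sensor:
        detections |= {i for i, p in enumerate(cells) if p >= DETECTION_THRESHOLD}
    return sorted(detections)


def low_level_fusion(cells_per_sensor):
    """Toy low-level fusion: combine the evidence from all sensors
    for each cell first, then apply the threshold once."""
    fused = [fuse_log_odds(ps) for ps in zip(*cells_per_sensor)]
    return [i for i, p in enumerate(fused) if p >= DETECTION_THRESHOLD]


# Made-up per-cell occupancy confidences for four cells.
# Cell 1: a real but weakly observed object (both sensors slightly positive).
# Cell 2: a camera false alarm (e.g. glare) that the radar contradicts.
camera = [0.10, 0.60, 0.75, 0.10]
radar = [0.10, 0.65, 0.20, 0.10]

print(object_level_fusion([camera, radar]))  # [2]
print(low_level_fusion([camera, radar]))     # [1]
```

In this sketch the object-level path reports only the camera's false alarm in cell 2 and misses the weak object in cell 1, while the low-level path does the opposite. Real object-level pipelines associate tracked objects rather than taking a simple union, so the example only conveys the intuition.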